In the mid-90s, Sybase rolled out its new database. It was a great leap forward in performance and they pushed it like crazy. Sybase’s claims were justified, but it was a new way to look at databases, and Sybase loudly announced how different it was from what people were used to. Oops. They sold almost none of it, hit a financial wall and never quite recovered.

That came to mind during yesterday’s BBBT presentation by Actian. Their technology foundation goes back to Ingres and that means they’ve been in the database market a long time. The question is whether or not they’ve learned from past case studies.

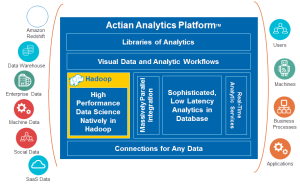

The presenters were John Santaferraro, VP of Solution and Product Marketing, and Emma McGrattan, SVP Engineering. They gave a great technical overview of Actian’s offerings. Put simply, they’re providing a platform for Big Data access. At the core is Hadoop, but they’ve taken their deep understanding of RDBMS technology and incorporated SQL access. That clearly opens up two things:

- Better access to partners for ETL and analytics

- The ability for the mass of business analysts to get at Hadoop data to more easily perform their jobs

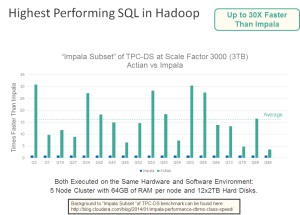

That’s a great thing and I’ll discuss later whether they’re taking that technology to the right markets. Before that, however, I should point out the main competitive point they repeatedly hit on. TPC benchmarks are public, so they went out and compared themselves to what they consider, rightly, to be their main competition: Cloudera Impala. Their results are seen in the chart below.

Actian’s TPC-DS comparison with Cloudera Impala

They returned to this time and time again. On the other hand, they discussed the full platform intelligently but only briefly.

They also covered more of the technology, and there’s a lot of it. As a Computer Associates company, they grow by acquisition. Actian is not just a renamed Ingres; it has acquired VectorWise, Versant, Pervasive and ParAccel. Many companies have had trouble acquiring and integrating firms, but the initial descriptions seem to show a consolidated platform.

One caveat: we had no demo. The explanation was that the Hadoop Summit demo went so well that they’re in the middle of moving it to a new server, and IT didn’t give a heads up. Believable, and I personally am not too worried. As a former field guy, I know how little emphasis to put on a short demo.

So what did I think was the key technology, if not performance? That’s next.

Hadoop meets SQL

To folks focused on the largest data sets, and to others who, as with car owners, like speed purely for its own sake, the performance is impressive. To me, that’s not the key. Rather, it’s the ability to bridge the Hadoop-SQL divide. As John Santaferraro pointed out, orders of magnitude more business analysts and business users know SQL than know MapReduce and the related underpinnings of Hadoop.
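To make that gap concrete, here is a rough sketch of the same small aggregation written two ways: as hand-rolled map, shuffle and reduce steps, and as a single SQL statement. Everything in it is an assumption for illustration only. The `SALES` records and function names are hypothetical, plain Python stands in for a MapReduce job, and the standard library's `sqlite3` stands in for a SQL engine. None of it is Actian's or Hadoop's actual API.

```python
import sqlite3
from collections import defaultdict

# Hypothetical sample records: (region, sale_amount)
SALES = [("east", 100), ("west", 250), ("east", 50), ("north", 75), ("west", 25)]


def total_by_region_mapreduce(records):
    """Hand-rolled map, shuffle and reduce phases, in plain Python."""
    # Map: emit one (key, value) pair per record.
    mapped = [(region, amount) for region, amount in records]
    # Shuffle: group values by key.
    grouped = defaultdict(list)
    for region, amount in mapped:
        grouped[region].append(amount)
    # Reduce: collapse each group to a single total.
    return {region: sum(amounts) for region, amounts in grouped.items()}


def total_by_region_sql(records):
    """The same aggregation as one declarative SQL statement."""
    conn = sqlite3.connect(":memory:")  # in-memory stand-in for a SQL engine
    try:
        conn.execute("CREATE TABLE sales (region TEXT, amount INTEGER)")
        conn.executemany("INSERT INTO sales VALUES (?, ?)", records)
        rows = conn.execute(
            "SELECT region, SUM(amount) FROM sales GROUP BY region"
        ).fetchall()
    finally:
        conn.close()
    return dict(rows)


if __name__ == "__main__":
    print(total_by_region_mapreduce(SALES))
    print(total_by_region_sql(SALES))
```

Both functions return the same totals. The difference is that the second one is something most business analysts could already write and read.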

Actian platform

While other Big Data companies have been building bridges to ETL, data cleansing, analytics and other tools in the ecosystem, the custom work that requires is time-consuming. Opening the ability to use standard, existing SQL tools means you can more quickly build a stronger ecosystem.

Why does that matter?

What is the market?

During the presentation, the Actian team was asked about their sweet spot. Is it folks already playing with Hadoop who want better access to enterprise data, or is it companies who’ve heard about Hadoop but haven’t stepped in yet to try because of all the questions? Their answer was the first group. I think that’s wrong; however, I understand why they are focused there.

Another statement from John was that they are in Silicon Valley and everyone there thinks everyone uses Hadoop because everyone there does. He admitted that’s not true outside that small region. However, sometimes it’s hard to fight the difference between what you intellectually know and what you’re used to. I’ve seen it in multiple companies, and I think it’s happening here.

The mass of global businesses haven’t yet touched Hadoop. It’s very different from what the typically overburdened and underfunded IT organization does, and that much change is scary. Silicon Valley is full of early adopters; it attracts them. In addition, there are plenty of early adopters elsewhere for the picking. However, there are now a lot of vendors in the BI and big data spaces and we’re getting close to a tipping point. The company that figures out how to cross the chasm first is the one that will make it big.

It’s not pure performance that will attract the mass market; it’s how to get the advantages of big data in the most affordable way with the easiest transition path. It’s the ability to quickly leverage existing IT infrastructure and to join it with the newest technology.

Once again, it’s evolution rather than revolution that will win the day.

Summary

From what I saw of the platform, it’s a great start. The issue I see is the focus on the wrong market. The technology will always be critical, but it only exists to solve business problems. Actian seems to have a good handle on the technology and is on a path to integrate and leverage all the acquisitions into a solid platform, but will they be able to explain why that matters to the right market?

There is hope for that. One thing discussed is that their ability to bridge SQL and Hadoop means they are working on building partnerships with major vendors to extend their ecosystem. If they focus on that, they have a great chance of being very successful and being the company that brings Hadoop to the wider IT market.

Twitter: @actiancorp, @santaferraro & @emmakmcgrattan