My latest Tech Target article is up at http://searchdatamanagement.techtarget.com/tip/SQL-vs-NoSQL-database-design-debate-isnt-even-a-real-fight.

BI and the Eisenhower Matrix

It wasn’t my chosen title but the author was trying to make a play on words with Ike, so move past it and read my latest article on Tech Target.

Technology and Disruption

Late last year, I read an article in the Harvard Business Review on disruptive innovation. I thought it needed correction and decided to post my response on LinkedIn.

TDWI & Teradata: An overview of data-centric security

Yesterday’s TDWI webinar was focused on data-centric security. The tag team was Fern Halper, Research Director for Advanced Analytics, TDWI, and Jay Irwin, Director of InfoSec, Teradata. It’s always nice when the two halves of a sponsored presentation fit well. For that reason and for the content, this was a nice presentation.

Everyone in the industry knows that data breaches happen, and we all talk about the issue. I’ve seen a few articles and lists about the number of successful attacks, but Fern Halper pointed us to a nice graphic from Information is Beautiful. She also pointed to another study that showed that “In 2013, 33% of respondents said their company had a data breach. In 2014 the percentage has increased to 43%.” It’s always a race between black hats and white hats, so it’s important to minimize not only your chance of getting hacked, but also the importance and usefulness of data gained from successful hacks.

Ms. Halper then discussed four types of data security:

- Perimeter security: monitoring network access for intrusion detection.

- Authorization and Access: Password and role-based data protections.

- Encryption: Using cryptography to encode data.

- Logging and monitoring: Analyzing access patterns.

Each part is necessary but insufficient. Authorization is only as strong as people’s passwords. If it’s easy to steal the encryption key, encryption doesn’t matter. A robust security system leverages all the types.

One important note: Later in her presentation and throughout Jay Irwin’s section, encryption didn’t exist alone but alongside tokenization. The latter is a different security technology, where characters, words, numbers and fields are replaced with other symbols, or tokens, that still look as if they’re real and can still be used in analysis. Mr. Irwin pointed out he prefers “data protection” as a rubric that covers all the techniques of data-level security.
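To make the difference from encryption concrete, here is a toy sketch of vault-based tokenization in Python. It was not shown in the webinar and is not how Teradata or any other vendor implements it; the `TokenVault` class and its method names are my own hypothetical illustration. It assumes a simple in-memory lookup table, where a real system would use a hardened, access-controlled vault.

```python
import secrets
import string


class TokenVault:
    """Toy vault-based tokenizer (hypothetical, not production security)."""

    def __init__(self):
        self._token_for = {}  # real value -> token
        self._value_for = {}  # token -> real value

    def _random_lookalike(self, value):
        # Swap each digit for a random digit and each ASCII letter for a
        # random letter of the same case; keep punctuation and spacing so
        # the token still "looks real".
        out = []
        for ch in value:
            if ch.isdigit():
                out.append(secrets.choice(string.digits))
            elif ch.isascii() and ch.isalpha():
                pool = string.ascii_uppercase if ch.isupper() else string.ascii_lowercase
                out.append(secrets.choice(pool))
            else:
                out.append(ch)
        return "".join(out)

    def tokenize(self, value):
        # The same input always gets the same token, so joins and counts
        # on the tokenized column still work in analysis.
        if value in self._token_for:
            return self._token_for[value]
        for _ in range(100):
            token = self._random_lookalike(value)
            if token not in self._value_for:
                break
        else:
            raise RuntimeError("could not find an unused token")
        self._token_for[value] = token
        self._value_for[token] = value
        return token

    def detokenize(self, token):
        # Only someone with access to the vault can get the real value back.
        return self._value_for[token]


vault = TokenVault()
card = "4111-1111-1111-1111"
token = vault.tokenize(card)
print(token)                    # same shape as a card number, different digits
print(vault.detokenize(token))  # the original value, via the vault
```

Unlike encryption, there is no key that turns the token back into the original value. The mapping lives only in the vault, so a stolen table of tokens is of little use on its own.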

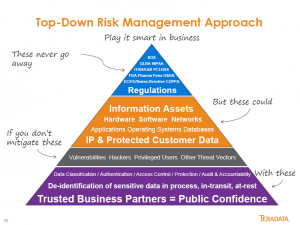

Along with that clarification, Jay Irwin also described the multiple layers as “Defense in Depth,” a concentric ring of security to ensure there’s no single point of failure. Jay also provided my favorite slide of the presentation. While it’s too wordy, it’s a pretty clear view of Teradata’s top-down approach.

An organization must start with understanding the rules and regulations that drive data security. Only then can you identify the data assets that need special attention in order to protect them from hackers.

Jay has a lot more to say in a lot more detail, and I won’t cover it all. While I blog about webinars so you don’t have to watch, this one’s an exception. If you want to get a good, broad view of core data security issues, take some time and listen to the webinar.

TDWI Webinar — Engaging the Business, again from the technologist’s perspective

This week’s TDWI hosted webinar was about engaging business and, once again, it came from the standpoint of technologists rather than from business. There were some very good things said. However, until our industry stops thinking of business knowledge workers as children to be tutored and begins to think about them as people whose knowledge is the core of what we must encapsulate, we’ll continue to miss the mark and adoption of solutions will remain slow.

The main presenter was David Loshin, President of Knowledge Integrity. He began the presentation with a slide that describes his view of the definition of “data driven,” including three main points:

- Focus on turning data into actionable knowledge that can lead to increased corporate value.

- Aware of variance that can cause inconsistent interpretation.

- Coordination among data consumers to enforce standards for utilization.

We should all clearly understand that the first item is not new and was not created by the business intelligence (BI) industry. Business has always been data driven. What we’re able to do now is access far more data than ever before so that we can provide a more robust view of the corporation.

Inconsistent Data v Inconsistent Utilization

The second bullet is a core point. Mr. Loshin used a couple of examples such as sales territory and other areas where definitions are fuzzy. One clear difference to me is one I directly experienced 25 years ago, and more directly addresses the visualization side of the BI conundrum. I was working for a major systems integrator (SI) and my client was, well, let’s just say it was a large, fruit-based computing company.

A different SI had created an inventory system for the client’s manufacturing facility, but the system was a failure even though all the right data was in it. The problem was that the reports were great for the accounting department but not for inventory and manufacturing. We interviewed the inventory team and then rewrote reports to address and present the information from their standpoint.

Too often, technologists get lost in the detailed data definitions and matching fields across data sources. That is critical, but it loses the big picture. Even when data is matched, different business people use data differently.

Which brings us to David Loshin’s third point. No, we don’t need to enforce exact standards for utilization. We need to ensure that the data each consumer refers to is consistent, but we must do a better job in understanding that different departments can utilize the exact same data in a variety of ways.

Business Drivers and Data Governance

David did get to the key issue a bit later, on a slide titled Operationalizing Business Policies. He points out that it’s critical to ensure that “Information policies model the data requirements for business policy.” This is key and should be bubbled up higher in the mindset of our industry. While I hear it mentioned often, it seems to be honored more in the breach.

Time was spent discussing the importance of understanding different users and their varying utilization of data. As I mentioned in the introduction, the solution to the new complexities then veers from addressing business needs to ignoring history. In a previous blog post, I discussed how many in the industry seem to be ignoring the lessons to be learned from the advent of the PC. Mr. Loshin seems to be doing that when he talks about empowering the business users to set their own usability rules. He splits IT and business in the following way:

- Business data consumers are accountable for the rules asserting usability for their views of the data.

- IT becomes responsible for managing the infrastructure that empowers the business user.

The issue I have with that argument is a phrase that didn’t appear in this webinar until Linda Briggs, the moderator, mentioned it in a poll question right before Q&A: Data Governance. Corporations are increasingly liable for how they control and manage information. It does not make sense to allow each user to define their own data needs in a void. Rather than allow for massively expanded and relatively uncontrolled access to data and then later have to contract access, as corporations had to regain a handle on what was being done on scattered desktop computers, BI vendors should be positioning data governance from the start.

Whether it’s by executive fiat, a cross-functional team, or some other method, companies need to clarify data governance rules. Often, IT is the best intermediary between groups, actively participating in data governance definition as an impartial observer and facilitator. It is then the job of IT to ensure that it provides as open access as possible to business workers given their needs and the necessity of following governance rules.

There was one question, during Q&A, on the importance of data governance. I thought David Loshin again understated its importance while Harald Smith, Director of Product Management at Trillium, the webinar sponsor, had the comment that “everyone is responsible for data governance.” That is my only mention of the sponsor, as I felt his portion of the presentation was a recitation of sound bites, talking points and buzz words that didn’t provide any value to the hour.

Summary

David Loshin has a clear view of engaging the business and gets a number of key things correct. However, that view is one of a technologist looking over a self-imagined bridge separating technology and business. There’s not a bridge separating IT and business. They overlap in many critical areas and both must learn from and work well with each other.

Yellowfin DashXML Webinar: Good new feature, not so good launch

The launch of Yellowfin DashXML included a round of global webinars mid-week. Well, not “included,” it’s more that the webinar was the entire launch. The new product feature is useful, but as I’m a marketing person I do have to question how they’ve handled the launch.

Yellowfin, as with many business intelligence (BI) vendors, is focused on visualization, providing business knowledge workers the ability to easily see information. The presentation was by John Ryan, Director of Product Marketing, and Teresa Pringle, Product Specialist. As is obvious from the title of the webinar, it was to announce the availability of the first version of DashXML, a utility within Yellowfin that allows easy integration of custom XML into dashboards and reports.

While they do sell directly to IT organizations who provide their interface to their corporate users, they also have a strong OEM business. As Mr. Ryan pointed out, “Embedding BI is a large chunk of Yellowfin’s business.” While direct label clients also want to customize user interfaces, DashXML seems much more valuable to the OEM customer base, providing an easier way to integrate standards from existing applications in order to have a more consistent interface.

The key word in that last sentence was “easier,” not “easy,” and that’s just fine for what is needed. This is XML. As Ms. Pringle explained, programmers will need to be very familiar with CSS manipulation and also with JavaScript. DashXML is there to assist developers in providing customized visualizations; it is not for end users. The feature is available with a server license, providing deployment capability, and with a developer license for investigating the feature. It is not available as part of the per-user, distribution license for end users.

DashXML adds power and flexibility to Yellowfin’s offering and will better help its clients customize visualizations.

A Very Quiet Launch

As much as the presenters seemed to be working to imply DashXML is a new product, it’s really a feature of their platform. While the title of the webinar was a launch, nothing in the presentation or on their site implies it’s really a launch.

Almost the entire presentation was about the existing Yellowfin offering. Teresa Pringle’s “demo” portion of the webinar started with a whole lot of customized interfaces and only spent a few minutes showing the DashXML features in design and only for a single report in a dashboard. You could get the idea that it would make things easier, but it was also clear that’s all it did. There’s nothing really new, nothing that Yellowfin clients aren’t doing now, it’s a way to save time and money. Mind you, those are very valuable things, but the presentation didn’t focus on any ROI those savings might present.

What’s more intriguing is that they held a webinar, yet their site doesn’t reflect that knowledge. As of the writing of this blog entry (24 hours after the webinar), a few things seem to be missing:

- No DashXML item in their home page rotating banner.

- No DashXML mentioned on the rest of the home page.

- No DashXML item on their news/blog page.

- No DashXML added to their site menu, even though John presented a slide that implied DashXML was on the same level as their platform and web services offerings.

If the feature isn’t important enough to discuss on the web site, why have a webinar? After all, the purpose of a webinar is to drive interest in the product and one of the key follow ups for webinars should also be gaining information on your site to hopefully drive customer tracking and contact information as lead qualification.

DashXML is a nice addition that can help IT and OEM developers blend point-and-click development and coding to provide customized visualization interfaces with better ROI. However, a weak webinar and no content is neither a silent launch nor a strong one. Sadly, the marketing doesn’t rise to the quality of the product enhancement.

TDWI Webinar: Innovations and Evolutions in BI, Analytics, and Data Warehousing

TDWI held a webinar to announce their latest major report. While there are always a lot of intriguing numbers in the reports, it’s also important to remember the TDWI audience is self-selecting. People interested in the latest information lean towards the leading edge so their numbers should be taken as higher than would be in the general IT market place. Still, the numbers as they change over time are valuable and the views of the analysts are often worth hearing.

As the webinar was pushing a major report, the full tag team was in attendance: David Stodder, TDWI Director for BI, Fern Halper, TDWI Director for Analytics, and Philip Russom, TDWI Director for Data Management.

David Stodder presented his section first, and one important point he made had nothing to do with numbers. He briefly discussed one quote and user story and it was from a government employee. Companies using Hadoop to better understand internet business and relationships tend to get almost all the press, but David pointed out the importance of data and analytics in helping governments better address the needs of their citizens.

A very intriguing set of numbers David provided was on how many responders were on current versions of software versus older versions. While you can see that some areas are more quickly adopting the SaaS model, that’s not the key point he made. Only 27% of respondents said they’re on the current version of their data security software. A later slide shows that security is one reason for hesitation in the move to mobile, but Mr. Stodder rightly points out that underlying all the information channels is the basis of data security. It’s not a question of if you’ll get hacked but when, so data security should be kept updated.

The presentation was then turned over to Fern Halper. I look a bit askance at the claim that the Internet of Things (IoT) is a “trend.” Her data shows only 18% taking advantage of it today and 40% might be using it within three years. We’ve been talking about IoT for a while, and it’s clearly being slowly integrated into business, but I wouldn’t say it’s as fashionable as the word trend would imply.

On the more useful side is the table she showed that’s simply titled “Analytics hits mainstream.” It not only shows the massive adoption of the last decade’s focus on dashboards and BI tools, but also that around 30% of respondents are using many of the newer tools and techniques, and the next three years indicate a doubling in usage.

Philip Russom gave the final segment of the presentation. His first slide on the adoption of newer technologies for data warehousing showed something that many have finally admitted in the last year or so: No-SQL is an excuse made by people who don’t understand how business technology works. While the numbers show 28% of respondents using Hadoop, they also show 22% using SQL on Hadoop. The numbers over the next three years are even more interesting: 36% say they’ll be using Hadoop and 38% will be using SQL on Hadoop. That means existing No-SQL folks will be moving to SQL.
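For those who like to see the arithmetic, here is a quick Python sketch using only the four Hadoop percentages quoted above. The variable and function names are mine, and the comparison treats the survey percentages as directly comparable shares of respondents, which is an assumption rather than something the report states.

```python
# Share of respondents, as quoted in the webinar.
adoption = {
    "Hadoop": {"today": 28, "in_three_years": 36},
    "SQL on Hadoop": {"today": 22, "in_three_years": 38},
}


def point_change(tech):
    """Percentage-point growth expected over the next three years."""
    row = adoption[tech]
    return row["in_three_years"] - row["today"]


def sql_to_hadoop_ratio(period):
    """SQL on Hadoop users per Hadoop user for the given period."""
    return adoption["SQL on Hadoop"][period] / adoption["Hadoop"][period]


for tech in adoption:
    print(f"{tech}: +{point_change(tech)} points")

for period in ("today", "in_three_years"):
    print(f"{period}: {sql_to_hadoop_ratio(period):.2f} SQL-on-Hadoop users per Hadoop user")
```

SQL on Hadoop is expected to grow by twice as many points as Hadoop itself, and the ratio moves from below one to above one. That is the basis for saying today’s No-SQL users are heading toward SQL.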

The presentation ended with the team of analysts presenting their list of ten priorities for those people interested in emerging technologies. To me, the first isn’t the first among equals, it is set far above all the rest: Adopt them for their business benefits. All the other nine items are how IT addresses the challenges of new technologies, but those things are useless unless you understand how technologies will support business. Without that, you can’t provide an ROI and you can’t get business stakeholders to support you for long. That’s strategy, all the other points are just tactics.

As usual, get the report and browse it.

TDWI Webinar Review: Fast Decision Making with Analytics

This is more of a marketing flavored post as the recent presentation seemed to miss its own point. The title implied it was about fast decision making, but Fern Halper, TDWI Research Director for Advanced Analytics, gave a rather generic presentation about the importance of operationalizing analytics.

Fern gave a nice presentation about operationalizing analytics, but it was not significantly different than her last few. In addition, some of the survey issues discussed were clearly not well thought out. For instance, Ms. Halper listed the expected growth of predictive analytics and web/mobile analytics as if they belonged in the same discussion. The fact that web and mobile are methods of display doesn’t overlap with whether they are used to display descriptive or prescriptive analytics. The growth of those display methods also doesn’t move away from the use of dashboards in CRM and ERP applications, as was implied, since those applications will migrate views to the new display methods.

The best thing mentioned by both Fern Halper and the SAP presenters was the need for multiple data sources, which came up multiple times. Seeing the continued refocusing of many firms on wide data rather than big data is a good thing for the industry. Big data is more of a technical issue while wide data more directly addresses complex business environments.

Now I’m hoping for more people to begin to refer to loosely structured data rather than unstructured data. Linguists, I’m sure, are constantly amused at hearing languages referred to as unstructured.

The case study was by Raj Rathee, Director, Product Management, SAP. It was an interesting project at Lufthansa, where real-time analytics were used to track flight paths and suggest alternative routes based on weather and other issues. The business key is that costs were displayed for alternate routes, helping the decision makers integrate cost and other issues as situations occur. However, that was really the only discussion of fast decision making with analytics.

The final marketing note is that the Q&A was canned but the answers didn’t always sync up. For instance, the moderator asked one question of Fern, she had a good answer, but there was no slide in the pack about her response, just the canned SAP slide referenced by Ashish Sahu, Director, Product Marketing, SAP, after Ms. Halper spoke.

I think the problem was that the presenters didn’t focus down on a tight enough message and tried to dump too much information into the presentation. The message got lost.

DBTA Webinar: Cloud Data Warehousing Simplified

A recent DBTA webinar was on how the data warehouse is still with us. It was by Sarah Maston, Developer Advocate, IBM Cloud Services. Simply put, it was a pitch for IBM and how their data warehousing solutions can help people more easily move to the cloud. Sarah was very knowledgeable, but she’s one of those smart folks who I’d suggest take a class in presentation skills. IBM must have them and it would help her be even more powerful in her talks.

The core of the presentation was talking about how dashDB, IBM’s columnar, MPP database is perfect for data warehousing and how you can easily move information to it. Being at IBM, she had no hesitation talking about the big, visible name in Cloud: Amazon. Her claim is that IBM Cloudant is a much more powerful and agile tool for loading dashDB than is Amazon DynamoDB for Amazon Redshift. From my decades of high tech, I can believe it. IBM’s challenge is going to be whether or not they can communicate to the SMB market in ways they want to hear. That’s been a regular challenge for IBM.

One of the most interesting things Ms. Maston discussed was how to get information from systems into the data warehouse. As she said, in reference to IBM Bluemix, “meet the ODS.” I’ve previously said similar things and think it’s important to not forget the importance of the operational data store.

Data warehousing is not going away, it’s evolving. So too is the ODS. IBM is a company that often looks ahead very clearly but then sometimes misses the messaging. From the presentation, I see all the pieces are there, it’s early and they’ll grow, but it remains to be seen if they’ll learn how to address the market properly to get a major chunk of the business at which they’re aiming.

Review of BrightTALK Webinar: 5 Biggest Trends in B2B Lead Generation

This week, BrightTALK hosted a webinar based on a study driven by Holger Schulze. As he is the founder of the B2B Technology Marketing Community on LinkedIn, it should be no surprise that the topic was his recently released report on B2B lead generation. The presentation was a great panel discussion, with Mr. Schulze picking out portions of the report and then the panel providing feedback. The panelists and their firms were:

- Dallas Jessup, BrightTALK.

- DeAnn Poe, DiscoverOrg.

- Ben Swinney, Entrust Datacard.

- Dale Underwood, LeadLifter.

- Sue Yanovitch, IDG Enterprise.

The survey was done via the same LinkedIn group, so it’s a bit self-selected, but the results are still interesting. The top five trends are:

- Increasing the quality of leads is the most important issue.

- The same issue of quality of leads is also listed as the major challenge.

- Lack of resources is the main obstacle.

- Lead generation budgets are starting to increase.

- Despite the hype, mobile lead generation still isn’t big in B2B.

I’m a blend of data and intuition driven, so it’s nice to see what I’d expect backed up by numbers. However, in the stretch to get a list of five, the first two seem redundant.

68% of the respondents mention lead quality as a priority. The first thing pointed out was by Ben Swinney, who was surprised that “Improve the sales/marketing alignment” was down at number four. Ben was the one customer in a group of vendors and that opinion has a lot of weight.

Fortunately for the vendors, he wasn’t in a void. The rest of the panel kept coming back to the importance of both marketing and sales working closely in order to ensure leads were recognized the same way and had consistent treatment. I agree that is necessary for improving lead quality and if there’s one key point to take from the presentation, it is improving that relationship.

Another intriguing piece of information is the return of the prioritization of conferences, which had dropped to third last year and are back up to number one. Sue Yanovitch was happy, as I’m sure all IDG folks are, and pointed out their research shows that tech decision makers value their peers and so sharing information in such forums is valuable. Enterprise sales often need to lead with success stories, because most companies don’t wish to be “bleeding edge.” Conferences are always a great forum not only for getting an improved understanding of technology but also for people to see how others with similar backgrounds are proceeding. The increase in budgets as the markets continue to recover leads to the return of attendance to such forums.

A key point in the proper handling of leads was brought up by Dale Underwood. His company’s research shows that people have completed 60-70% of their research before there’s a formal lead request put in to a prospective vendor. That implies a need for better tracking and handling of touch points such as web visits, and also means that sales needs to be better informed about those previous touch points. If not, sales can’t properly prepare for that first call.

That leads into another major point. Too many technology marketing people get as enamored of product as do the founders and developers. As Dallas Jessup rightly pointed out, lead generation techniques should focus on the prospect, not on the vendor. What pains is the market trying to solve? That leads to the right calls to action.

To wrap back around to the sales and marketing issue, the final point to mention came during Q&A, with a simple question of whether turning leads into customers is marketing’s or sales’ responsibility. That should never have been a question; asking how the two achieve it would have been better.

Sue began the reply by pointing out the obvious answer of “both,” though she should have said it proudly rather than saying it was a cop-out. All the other panelists chimed in with strong support that it has to be an integrated effort, the full circle lead tracking must happen.