Our presenters last Friday at the BBBT were Brett Sheppard and Manish Jiandani from Splunk. The company was founded on understanding machine data and the presentation was full of that phrase and focus. However, machine data has a specific meaning and that’s not what Splunk does today. They speak about operational intelligence but the message needs to bubble up and take over.

Splunk has been public since 2012 and has over 1200 employees, something not many people realize. They were founded in 2004 to address the growing amount of machine data and the main goal the presenters showed is to “Make machine data accessible, usable and valuable to everyone.”

However, their presentation focused on Splunk’s ability to access IVR (Interactive Voice Response) and Twitter transcripts, and that’s not machine data. When questioned, they pointed out that they don’t do semantic analysis but focus on the timestamp and other machine-generated data to understand operational flow. Still, while you might stretch and call that machine data, they also demonstrated some very simple analytics on the occurrence of keywords in text, and that certainly isn’t machine data.
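To make the distinction concrete, here is a minimal sketch of the kind of simple keyword analytics described above: counting keyword occurrences bucketed by the hour of each event’s timestamp. The sample events, the keyword list and the function name are all hypothetical, and this is plain Python for illustration, not Splunk’s search language or API.

```python
from collections import Counter
from datetime import datetime

# Hypothetical transcript events: (ISO timestamp, transcript text).
EVENTS = [
    ("2014-03-14T10:05:00", "I want to cancel my order"),
    ("2014-03-14T10:40:00", "billing question about my order"),
    ("2014-03-14T11:15:00", "please cancel the subscription"),
]

# Hypothetical keywords of interest.
KEYWORDS = {"cancel", "billing"}


def keyword_counts_by_hour(events, keywords):
    """Count keyword occurrences per (hour bucket, keyword) pair."""
    counts = Counter()
    for stamp, text in events:
        # The timestamp is the machine-generated part of the event.
        hour = datetime.fromisoformat(stamp).strftime("%Y-%m-%d %H:00")
        # The keyword match looks at the human-generated text itself.
        for word in text.lower().split():
            if word in keywords:
                counts[(hour, word)] += 1
    return counts


counts = keyword_counts_by_hour(EVENTS, KEYWORDS)
for (hour, word), n in sorted(counts.items()):
    print(hour, word, n)
```

The timestamp bucketing is arguably machine data analysis; the keyword match reaches into the content, which is why it stretches the definition.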

It’s clear that Splunk has successfully moved past pure machine data into a more robust operational intelligence solution. However, being techies from the Bay Area, it seems they still have their focus on the technology and its origins. They’re now pulling information from sources other than just machines, but are primarily analyzing the context of that information. As Suzanne Hoffman (@revenuemaven), another BBBT member analyst, pointed out during the presentation, they’re focused on the metadata associated with operational data and how to use that metadata to better understand operational processes.

Their demo was typical: nothing great, but all the pieces were there. The visualizations are simple and clear, and Splunk claims its data is accessible to BI vendors for better analytics. However, note that they have a proprietary database and provide access through ODBC and an API. Mileage may vary.

There was also a confusing message in the claim that they’re not optimized for structured data. Machine data is structured. While it often doesn’t have clear field boundaries, there’s a clear structure and simple parsing lets you know what the fields and data are in the stream. What they really mean is it’s not optimal for RDBMS data. They suggest that you integrate Splunk and relational data downstream via a BI tool. That makes sense, but again they need to clarify and expose that information in a better way.
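As an illustration of the point that machine data has structure even without clear field boundaries, here is a minimal sketch that parses one log line into named fields. The log line is a made-up example in the common web server access log layout, and the pattern and function name are my own assumptions, not anything Splunk showed.

```python
import re
from datetime import datetime

# Hypothetical access log line; no delimiters separate every field cleanly,
# but the layout is fixed and predictable.
LINE = '192.0.2.10 - - [14/Mar/2014:10:15:32 +0000] "GET /cart/checkout HTTP/1.1" 200 5120'

# One named group per field in the stream.
PATTERN = re.compile(
    r'(?P<host>\S+) \S+ \S+ '
    r'\[(?P<timestamp>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) (?P<protocol>[^"]+)" '
    r'(?P<status>\d{3}) (?P<size>\d+)'
)


def parse_line(line):
    """Return a dict of typed fields, or None if the line does not match."""
    match = PATTERN.match(line)
    if match is None:
        return None
    fields = match.groupdict()
    # Convert the text fields that have an obvious type.
    fields["timestamp"] = datetime.strptime(
        fields["timestamp"], "%d/%b/%Y:%H:%M:%S %z"
    )
    fields["status"] = int(fields["status"])
    fields["size"] = int(fields["size"])
    return fields


record = parse_line(LINE)
print(record)
```

A single regular expression is enough to recover the fields, which is the sense in which this data is structured, just not relational.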

And then there’s the messaging nit. While business is my main focus, technology presented with the wrong words jars an educated audience. Splunk is not the first company, nor will it, sadly, be the last, to have people who confuse “premise” and “premises.” However, it’s usually only one person in a presentation. Here, the slides and both presenters showed a corporate confusion that leads me to the premise that they’re not aware of how to properly present the difference between cloud and on-premises solutions.

Hunk: On the Hadoop Bandwagon

Another messaging issue was the repeated mention of Hunk without an explanation. They focused on it only later in the presentation. Hunk is their product for putting the Splunk Enterprise technology on a Hadoop database. Let me be clear: it’s not just accessing Hadoop information for analysis but moving the storage from their proprietary system to Hadoop.

This is a smart move that helps address customers who are heavily invested in Hadoop. At least at the presentation level, they have a strong message about offering the same functionality as in their core product, just residing on a different technology.

Note that this doesn’t just help the customer. It also helps Splunk scale their own database in order to reach a wider range of customers. It’s a smart business move.

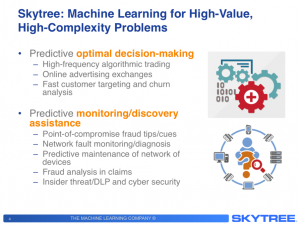

Security, Call Centers and Changing the Focus

The focus of their business message and a large group of customer slides is, no surprise, on network security and call center performance. The ability to look at the large amount of data and provide analysis of security anomalies means that Splunk is in the Gartner Magic Quadrant for SIEM (Security Information and Event Management).

In addition, IVR was mentioned earlier. Combined with other call center data, it allows Splunk to provide information that helps companies better understand and improve call center effectiveness. It’s a nice bridge from pure machine data to more full-featured data analysis.

That difference was shown by what I thought was the most enlightening customer slide, one about Tesco. For my primarily US readers, Tesco is a major grocery chain, with divisions focused on everything from the corner market to supermarkets. They are headquartered in England, are the major player in Europe and the second largest retailer by profit after Walmart.

As described, Tesco began using Splunk to analyze network and website performance, a purely machine data concern. As they saw the product’s benefit in more areas, they expanded to customer revenue, online shopping cart data and other higher-level business functions for analysis and improvement.

Summary

Splunk is a robust and growing company focused on providing operational intelligence. Unfortunately, their messaging is lagging their business. They still focus on machine data as the core message because that was their technical and business focus in the last decade. I have no doubts that they’ll keep growing, but a better clarification of their strategy, priorities and messages will help a wider market more quickly understand their benefits.