I’ve probably used this in other columns, but that’s life. MapR’s presentation to the BBBT reminded me of Yogi Berra’s statement that it feels like déjà vu all over again. Wait, if I think I’ve done this before, am I stuck in a déjà vu loop?

The presentation was a tag team effort of Steve Wooledge, VP Product Marketing, and Tomer Shiran, VP Product Management.

The Products and Their Aim

The first part of the déjà vu was good. People love to talk about freeware, but mission critical solution won’t be trusted on such. Even before Linux, before Unix, software came out and it took companies to package it with service and support to provide constancy and trust for widespread IT adoption. MapR is a key company doing that with Apache Hadoop, the primary open source technology for big data applications.

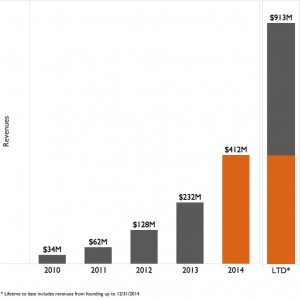

They’ve done the job well, putting together a strong company that, quite reasonably, has attracted some great investors and customers. Of course, because Hadoop is still in its infancy, even a leading company such as MapR only mentions 700 customer, companies paying for licenses; but that’s a statement about big data’s still fairly limited impact in operational systems not a knock on MapR.

Their vision statement is simple: “Empowering the As-it-happens business by speeding up the data-to-action cycle.” Note the key: Hadoop is batch oriented and all the players realize that real-time analysis matters for some key sales and marketing applications. Companies are now focusing on how fast they can get information out of the databases, not what it takes to get data in. A smart move but only half the equation.

One key part of the move to package open source into something trusted was pointed out by Steve Wooledge. When the company polled customers about why they chose MapR, the largest response was availability, the up time of the system. Better performance wasn’t far behind, but it’s clear that the company understands that availability is a critical business issue and they seem to be addressing it well.

Where the déjà vu hits in a not-so-positive way is the regular refrain of technologists still not quite getting business – even when they try. This isn’t a technology problem but an innovator’s problem. When you get so wrapped up in the cool things you’re doing, you think that you need to lead with the cool things, not necessarily what the market wants.

One example was when they were describing the complexity of the MapR packaging. Almost all the focus was on the cool buzzwords of open source. Almost lost in the mix was the mention that their software supports NFS. It was developed more than 30 years ago and helps find files on networks. That MapR helps link both the latest and the still voluminous data in existing file systems is a key point, something that can help businesses understand that Hadoop can be integrated into existing systems and infrastructure. However, it’s not cool so the information is buried.

The final thing I’ll mention about the existing products is that MapR has built a nice three product suite, providing open source, mid-tier and full enterprise versions. That’s the perfect way to address the open source conundrum and move folks along the customer curve.

Apache Drill: Has it Bitten Off Too Much?

Sorry, couldn’t help the drill bit reference. Tomer Shiran took the later part of the presentation to show off Apache’s latest data toy, Apache Drill, intended to bridge the two worlds of data. The problem I saw was one not limited to Tomer, MapR or even Apache, but to all folks with with what they think of as new technology: Over hype and an addiction to revolutionary rather than evolutionary words and messages. There were far too many phrases that denigrated IT and existing technology and implied Drill would replace things that weren’t needed. When questioned, Tomer admitted that it’s a compliment; but the unthinking words of many folks in the industry set out a pattern inimical to rapid adoption into the Global 1000’s critical information paths.

Backing up that was a reply given to one questioner: ““CIO of one of the largest tech companies said they can’t keep doing things the same way.” Tech companies tend to be bleeding edge by nature, they do not represent the fuller business world. More importantly, the idea that a CIO saying she needs to change doesn’t mean the CIO is planning on throwing out existing tools that work. It means she wants to expand and extend in a way to leverage all technology to provide better decision making capabilities to the rest of the CxO suite.

Another area of his talk finally brought forward, through a very robust discussion, of one terminology issue that many are having. Big data folks like to talk about “no schema” but that’s not really true. Even when they modify the statement to be “schema on read” it’s missing the point.

They seem to be confusing fixed layout, relational records with the theory of schemas. XML is a schema for data exchange. It’s very flexible and can be self-defined, but it’s a schema. As it came from SGML, it’s not even the first iteration of flexible schemas. The example Mr. Tomer gave was just like an XML schema. Both data source and data recipient have to know some basic information such as field names in order to make sense of data, so there’s a schema.

Flexible schemas not only aren’t new, they don’t obviate the need for flexible schemas. They’re just another technique for managing the wide variety of data that business wishes to turn into information. As long as big data folks misusing a term and acting as if they have something revolutionary, the longer they’ll retard their needed incursion into IT and business information.

Summary

Hadoop and big data aren’t going anywhere except forward. The question is at what speed. There are some great things happening in both the Apache open source world and MapR’s licensed support for that world, but the lack of understanding of existing IT and business is retarding adoption of the new and exciting technologies.

When statements such as “But the sales guy won’t do X” are used by folks who have never been in and don’t understand sales, they’re missing the market. Today’s sales person is looking for faster and more accurate information, and is using many tools people would have said the same thing about only ten years earlier. In the meantime, sales management and the CxO suite who provide guidance for the sales force are even more interested in big picture information coming from massaging large data sources.

The folks in the new arenas such as Hadoop need to realize that they are complementary to existing technologies and that can help both IT and business. When pointing that out, I was asked by one of the presenters if that meant he should do two case studies, one with Hadoop, flexible schema and one with old line uses, I gave a clear no. It should be one with new and one that shows new and existing data sources combining to give management a more holistic picture than previously possible.

Evolution is good. MapR can help. They need to do the tough part of technology and more their view from what they think is cool to what the market thinks is needed.