ThoughtSpot is an analytics company and they made a number of announcements at their conference this week. One that caught my eye was about SearchIQ, a new product for natural language processing (NLP). It layers text and voice query on top of their platform. Read more in my latest article on forbes.com.


Yellowfin DashXML Webinar: Good new feature, not so good launch

The launch of Yellowfin DashXML included a round of global webinars mid-week. Well, not “included,” it’s more that the webinar was the entire launch. The new product feature is useful, but as I’m a marketing person I do have to question how they’ve handled the launch.

Yellowfin, as with many business intelligence (BI) vendors, is focused on visualization, providing business knowledge workers the ability to easily see information. The presentation was by John Ryan, Director of Product Marketing, and Teresa Pringle, Product Specialist. As is obvious from the title of the webinar, it was to announce the availability of the first version of DashXML, a utility within Yellowfin that allows easy integration of custom XML into dashboards and reports.

While they do sell directly to IT organizations who provide their interface to their corporate users, they also have a strong OEM business. As Mr. Ryan pointed out, “Embedding BI is a large chunk of Yellowfin’s business.” While direct label clients also want to customize user interfaces, DashXML seems much more valuable to the OEM customer base, providing an easier way to integrate standards from existing applications in order to have a more consistent interface.

The key word in that last sentence was “easier,” not “easy,” and that’s just fine for what is needed. This is XML. As Ms. Pringle explained, programmers will need to be very familiar with CSS manipulation and also with JavaScript. DashXML is there to assist developers in providing customized visualizations; it is not for end users. The feature is available with a server license, providing deployment capability, and with a developer license for investigating the feature. It is not available as part of the per-user, distribution license for end users.

DashXML adds power and flexibility to Yellowfin’s offering and will better help its clients customize visualizations.

A Very Quiet Launch

As much as the presenters seemed to be working to imply DashXML is a new product, it’s really a feature of their platform. While the title of the webinar was a launch, nothing in the presentation or on their site implies it’s really a launch.

Almost the entire presentation was about the existing Yellowfin offering. Teresa Pringle’s “demo” portion of the webinar started with a whole lot of customized interfaces and only spent a few minutes showing the DashXML features in design and only for a single report in a dashboard. You could get the idea that it would make things easier, but it was also clear that’s all it did. There’s nothing really new, nothing that Yellowfin clients aren’t doing now; it’s a way to save time and money. Mind you, those are very valuable things, but the presentation didn’t focus on any ROI those savings might present.

What’s more intriguing is that they held a webinar, yet their site doesn’t reflect that knowledge. As of the writing of this blog entry (24 hours after the webinar), a few things seem to be missing:

- No DashXML item in their home page rotating banner.

- No DashXML mentioned on the rest of the home page.

- No DashXML item on their news/blog page.

- No DashXML added to their site menu, even though John presented a slide that implied DashXML was on the same level as their platform and web services offerings.

If the feature isn’t important enough to discuss on the web site, why have a webinar? After all, the purpose of a webinar is to drive interest in the product, and one of the key follow-ups for a webinar should be having information on your site that drives customer tracking and captures contact information for lead qualification.

DashXML is a nice addition that can help IT and OEM developers blend point-and-click development and coding to provide customized visualization interfaces with better ROI. However, a weak webinar and no content is neither a silent launch nor a strong one. Sadly, the marketing doesn’t rise to the quality of the product enhancement.

TDWI Webinar Review: Fast Decision Making with Analytics

This is more of a marketing flavored post as the recent presentation seemed to miss its own point. The title implied it was about fast decision making, but Fern Halper, TDWI Research Director for Advanced Analytics, gave a rather generic presentation about the importance of operationalizing analytics.

Fern gave a nice presentation about operationalizing analytics, but it was not significantly different than her last few. In addition, some of the survey issues discussed were clearly not well thought out. For instance, Ms. Halper listed the expected growth of predictive analytics and web/mobile analytics as if they belonged in the same discussion. The fact that web and mobile are methods of display doesn’t overlap with whether they are used to display descriptive or prescriptive analytics. The growth of those display methods also doesn’t move away from the use of dashboards in CRM and ERP applications, as was implied, since those applications will migrate views to the new display methods.

The best thing mentioned by both Fern Halper and the SAP presenters was the need for multiple data sources, which came up multiple times. Seeing the continued refocusing of many firms on wide data rather than big data is a good thing for the industry. Big data is more of a technical issue while wide data more directly addresses complex business environments.

Now I’m hoping for more people to begin to refer to loosely structured data rather than unstructured data. Linguists, I’m sure, are constantly amused at hearing languages referred to as unstructured.

The case study was by Raj Rathee, Director, Product Management, SAP. It was an interesting project at Lufthansa, where real-time analytics were used to track flight paths and suggest alternative routes based on weather and other issues. The business key is that costs were displayed for alternate routes, helping the decision makers integrate cost and other issues as situations occur. However, that was really the only discussion of fast decision making with analytics.

The final marketing note is that the Q&A was canned but the answers didn’t always sync up. For instance, the moderator asked one question of Fern, she had a good answer, but there was no slide in the pack about her response, just the canned SAP slide referenced by Ashish Sahu, Director, Product Marketing, SAP, after Ms. Halper spoke.

I think the problem was that the presenters didn’t focus down on a tight enough message and tried to dump too much information into the presentation. The message got lost.

SAS: Out of the Statistician’s Pocket and into the Business Briefcase

I just saw an amusing presentation by SAS. Amusing because you rarely get two presenters who are both as good at presenting and as knowledgeable about their products. We heard from Mike Frost, Senior Product Manager for Data Management, and Wayne Thompson, Chief Data Scientist. They enjoy what they do and it was contagious.

It was also interesting from the perspective of time. Too many younger folks think if a firm has been around for more than five years, it’s a dinosaur. That’s usually a mistake, but the view lives on. SAS was founded almost 40 years ago, in 1976, and has always focused on analytics. They have been historically aimed at a market that is made up of serious mathematicians doing heavy statistical work. They’re very good at what they do.

The business analysis sector has been focused on less technical, higher level business number crunching and data visualization. In the last decade, computing power has meant firms can dig deeper and can start to provide analysis SAS has been doing for decades. The question is whether or not SAS can rise to the challenge. It’s still early, but the answer seems to be a qualified but strong “yes!”

Both for good and bad, SAS is the largest privately held software company, still driven by founder James Goodnight. That means a good thing in that technically focused folks plow 23% of their revenue into R&D. However, it also leaves a question mark. I’ve worked for other firms long run by founders, one a 25+ year old firm still run by brothers. The best way to refer to the risk is that of a famous public failure: Xerox and the PC. For those who might not understand what I’m saying, read “Fumbling the Future” by Smith and Alexander. The risk comes down to the people in charge knowing the company needs to change but being emotionally wedded to what’s worked for so long.

The presentation to the BBBT shows that, while it’s still early in the change, SAS seems to be mostly avoiding that risk. They’re moving towards a clean, easier to use UI and taking their first steps towards collaboration. More work needs to be done on both fronts, but Mike and Wayne were very open and honest about their understanding the need and SAS continuing to move forward.

One of the key points by Mike Frost is one I’ve also discussed. While they disagree with me and think the data scientist does exist, the SAS message is clear that he doesn’t work in a void. The statistician, the business analyst and business management must all work in concert to match technical solutions to real business information needs.

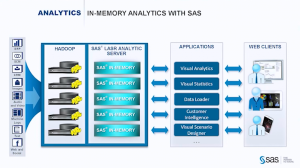

LASR, VAE and a cast of thousands

The focus of the presentation was on SAS LASR, their in-memory analytics server. While it leverages Hadoop, it doesn’t use MapReduce because that involves disk access during processing, losing the speed advantage of in-memory applications.

As Mike Frost pointed out, “It doesn’t do any good to run the right model too late.”

One point that still shows the need to think more about business is that TCO was mentioned only in passing. No slide or strong message supported it. They’re still a bit too focused on technology, not what sells the business decision makers on business intelligence (BI).

Another issue was the large number of ancillary products in the suite, including Visual Data Explorer, Data Loader and others. The team mentioned that SAS is slowly moving through the products to give them the same interface, but I also hope they’re looking at integrating as much as possible so the users don’t have the annoyance of constantly moving between products.

One nice part of the demo was an example of discussing what SAS has termed “poorly structured data” as opposed to “unstructured data” that’s the rage in Hadoop. I prefer “loosely structured data.” Mike and Wayne showed the ability to parse the incoming file and have machine intelligence make an initial pass at suggesting fields. While this isn’t new (I worked at a company in 2000 that was doing that), it’s a key part of quickly integrating such data into the business environment. The company I reference had another founder who became involved in other things and it died. While I’m surprised it took firms so long to latch onto and use the technology, it doesn’t surprise me that SAS is one of the first to openly push this.

Another advantage brought by an older, global firm, related to the parsing, is that it works in multiple languages, including right-to-left languages such as Hebrew and Arabic. Most startups focus on their own national language and it can be a while before the applications are truly global. SAS already knows the importance and supports the need.

Great, But Not Yet End-To-End

The only big marketing mistake I heard was towards the end. While Frost and Thompson are rightfully proud of their products, Wayne Thompson crowed that “We’re not XXX,” a reference to a major BI player, “We’re end-to-end.” However, they’d shown only minimal visualization choices and their collaboration, admittedly, isn’t there.

Even worse for the message, only a few minutes later, based on a question, one of the presenters showed how you export predicted values so that visualization tools with more power can help display the information to business management.

I have yet to see a real end-to-end tool and there’s no reason for SAS to push this iteration as more than it is. It’s great, but it’s not yet a complete solution.

Summary

SAS is making a strong push into the front end of analytics and business intelligence. They are busy wrapping tools around their statistical engines that will allow them to move much more strongly out of academics and the very technical depths of life sciences, manufacturing, defense and other industries to challenge in the realm of BI.

They’re headed in the right direction, but the risk mentioned at the start remains. Will they keep focused on this growing market and the changes it requires, or will that large R&D expenditure focus on the existing strengths and make the BI transition too slow? I’m seeing all the right signs, they just need to stay on track.

TDWI Webinar Review: Claudia Imhoff and SAP with an overview of the analytics supply chain

Tuesday’s TDWI webinar had a guest star: Claudia Imhoff. The topic was predictive analytics and the presentation was sponsored by SAP, so Pierre Leroux, Director of Product Marketing, SAP, also had his moment towards the end. Though the title was about predictive analytics, it’s best to view the presentation as an overview of the state of analytics, and there’s much more to discuss on that.

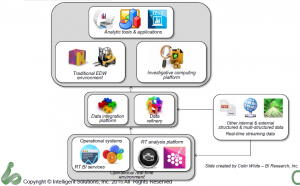

The key points revolved around a descriptive slide Ms. Imhoff presented to describe the changing analytics landscape.

Claudia Imhoff described the established EDW information supply chain as being the left half of the diagram, while the newer information, with web, internet of things (IoT) and other massive data sources, adds the right-hand side. It’s a nice, clean way of looking at things and makes clear that the newer data can still drive rather than eliminate the EDW.

One thing I’d say is missing is a good name for the middle box. Many folks call what Ms. Imhoff terms the Data Refinery a Data Lake or use other similar rationalizations. My issue is that there’s really no need to list the two parts separately. In fact, there’s a need to have them seamlessly accessible as a whole, hence the growth of SQL for Hadoop and other solutions. As I’ve expressed before, the combination of the data integration and data refinery displayed is just the next generation of the ODS. I like the data refinery label, but think it more accurately applies to the full set of data described in the middle section of the diagram.

Claudia also described the four types of analytics:

- Descriptive: What happened.

- Diagnostic: Why it happened.

- Predictive: What might happen.

- Prescriptive: What to do when it happens.

It’s important to understand the difference in analysis because each type of report needs to have a focus and an audience. One nit I have with her discussion of these was the comment that descriptive analytics are the least valuable. Rather, they’re the least strategic. If we don’t know what happened, we can’t feed the other types of analytics. Plus, reporting requirements in so much of business mean that understanding and reporting what happened remains very valuable. The difference is not how valuable, but in what way. Predictive and prescriptive analytics can be more valuable in the long term, but their foundation still rests on descriptive.

Not more with the Data Scientist…

My biggest complaint with our industry at large is still the obsession with the mythical data scientist. Claudia Imhoff spent a good amount of time on the subject. It’s a concept with super human requirements, with Claudia even saying that the data scientist might be the one with deep business knowledge. Nope. Not going to happen.

In Q&A, somebody brought up the point I always mention: Why does it have to be one person rather than a team. Both Claudia Imhoff and Pierre Leroux admitted that was more likely. I wish folks would start with that as it’s reasonable and logical.

I was a programmer as folks began calling themselves software engineers. I never liked that. The job wasn’t engineering but a blend of engineering and crafting. There was art. The two presenters continued to talk about the data scientist as having an art component, but still think that means the magical person is still a scientist. In addition, thirty years ago the developer was distanced much further from business, by development methods, technology and business practice. Being closer means, again, teamwork, with each person sharing expertise in math, coding, business and more to create a robust solution.

That wall has been coming down for years, but both technology and business are changing rapidly and are far more complex. The team notion is far more logical.

Business and Technology

The other major problem I had was a later slide and words accompanying it that implied it’s up to the business people to get on board with what the technologists are doing. They must find the training, they must learn that analytics are the answer to everything.

Yes, we’re able to provide better analytics faster to management than in the past. However, they’re not yet perfect nor will they be. Models are just that. As Pierre pointed out, models will never explain 100%.

Claudia made a great point earlier that one of the benefits of big data is eliminating sampling and looking at what the entire market is doing, but markets are still complex and we can’t glean everything. Technologists must get off the high horse and realize that some of the pushback from management is because the techies too often tend to dismiss intuition and experience. What needs to happen is for the messages to change to make it clear that modern analytics will help executives and line management make better decisions, not that it will replace their decision making.

In addition, quit making overly complex visualizations that have great scientific relevance but waste time. The users do not need to understand the complexities of systems. If we’re so darned smart, we can distill the visualizations to things easier to comprehend so that managers can get the information, add it to all the other information and experience and make decisions.

Technologists must adapt to how business runs as much as business must adapt to leverage technology.

Summary

The title of the presentation misrepresents the content. It was a very good presentation for understanding the high level landscape of the analytics information supply chain and it’s a discussion that needs to be held more often.

You’ll notice I didn’t say much about the demo by Pierre Leroux. That’s because of technical issues between demo and webinar software. However, both he and Claudia Imhoff took questions about the industry and market and gave thoughtful answers that should help drive the conversation forward.

IBM and the Cloud? Don’t write it off

At today’s investor meeting, IBM execs announced a target of $40 billion in revenue for cloud, analytics, mobile, social and security software by 2018. I expect to see folks talk about dinosaurs not being able to turn fast enough and predicting failure to meet that goal. I don’t know if they can do it, but to make such ardent predictions you’d have to ignore history.

Mid-sized Unix servers came along and folks talked about IBM going away.

IBM blew a chance to own the PC industry and the same predictions followed them.

Linux? Freeware was going to destroy the mainframe. Oops, Linux partitions run on mainframes.

Now we know the large growth of the cloud. Much of it has been on commodity boxes. However, as data gets larger, analytics more powerful and networks become more robust, there’s clearly space for a company with such a strong history in hardware, services and adapting to changes.

After all, too many people still think of IBM as a hardware company. While it’s too early for the 2014 report, you can check the 2013 Annual Report and look at page 7. Look at what a tiny percentage of the bar is hardware. Software and services are fairly even in splitting the vast majority of the revenue stream.

It’s a strong goal and will take a lot of pushing. How many politely phrased “re-orgs” will happen to lay off staff? Who knows? Will they succeed? No clue. All I expect is that they’ll continue to grow and nobody should count them out.