In a move joining the children of two famous early tech companies, Hewlett Packard Enterprise (HPE) announced they are going to acquire Cray Inc. It’s a smart move, but it brings a bit of nostalgia to my emotions. Read my latest Forbes.com article.

Tag Archives: big data

Data ingestion or data indigestion ?

Data Lakes, the renamed ODS, aren’t the only solution for accessing data. Think actual need, understand supporting metadata, then build your data ingestion plan. Read my latest TechTarget column.

Meshing Governance and Empowerment

My new white paper is available on the Jinfonet web site.

Webinar Review: Oracle Big Data Cloud, Understanding Business

People at technology startups love to call the industry giants dinosaurs. The analogy fails for a number of reasons. The funniest is that the dinosaurs existed for many millions of years. As the large companies exist now, are the startups saying the big companies will only disappear if we’re hit by a meteor? Companies became large by filling a need. While many might not be as nimble, their experience, especially in enterprise software, means they often see the needs of the business community while the small companies are focused too much on their “cool” technology.

This week’s Oracle webinar, hosted by the DBTA, was a good example of that. The speakers were Rich Clayton, VP Business Analytics Product Group, and Omri Traub, VP Software Development, and the subject was, no surprise, Oracle Big Data Cloud Service (OBDC. Yeah, I know. Too close to ODBC…). Before we get into the details, people need to be aware that Oracle is fully committed to the cloud, as pointed out in a recent advertorial in Forbes. Oracle is clearly competing with Amazon for enterprise cloud business. Big data is only one part of that.

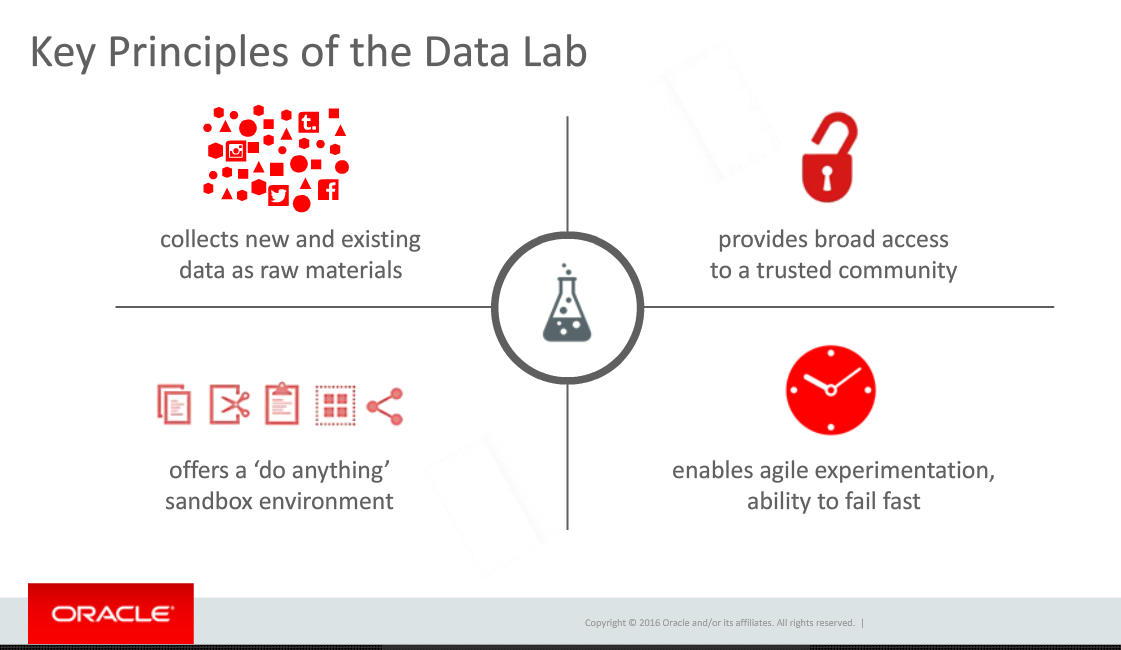

Rich Clayton began the presentation by pointing towards Thomas Edison’s laboratory as an example of using the ideas from many people to not only invent things but also to figure out how to market those inventions. He brought that directly into the evolution of corporate data labs. The biggest problem, Rich stated, is that labs are usually only populated by very technical people while they require a broader array of talents. That requirement is one of the data lab principles he defined and one I’ve also described as the missing component of many corporate data labs.

A related problem is that most products are so complex and silo’d that very technical people are needed. At this stage in business intelligence and big data, that’s the horse that needs to be addressed before the broad access cart can move.

Omri Traub then took over for the demonstration portion of the presentation. Unfortunately, he unintentionally proved the point about technical folks missing business needs by the setup he used for the demonstration. The demo was built around an enormous amount of New York City taxi data. While manipulating a billion record data set is cool and powerful, he never presented a business message. He pointed to the large volume of data, talked about other data sources he combined, and then played with the data to show correlations.

The problem? Omri claimed we were gaining insight. Correlations aren’t insight. Understanding how those correlations might impact your business, and having ideas for how to adapt the business to what you find, is insight. Nothing in the demonstration pointed towards insight.

Fortunately, Rich Clayton earlier had given a couple of case studies showing business insight gained by OBDC early customers. It would have been much better if Mr. Traub had focused on one of those cases or something similar.

The best point of the demonstration was when Omri showed how, in the middle of playing with some relationships, he easily incorporated some analysis created by a different person. As mentioned above, collaboration is critical and it looks like Oracle hasn’t limited that to just a marketing message but has worked to make sure that Oracle’s product helps the team. As many companies claim to do that and it was only an overview, your mileage might vary. Make sure when you talk to them to follow through and see whether the collaboration (not to mention the entire product…) meets your needs.

The final section was the Q&A. I’m a marketing person, so I have to be honest and state that it sounded like canned questions they wanted to address, as there was way too much about the full Oracle ecosystem brought into discussion at this point compared to what I’d expect from customers. Still, there was one important point.

A question was asked about what advanced analytics might be added. Mr. Traub had the perfect response. After quickly mentioning that, yes, Oracle was always looking at advanced analytics and how to add them, he made a much more important point. Collaboration is key and OBDC is designed to get business people involved. All analytics need to be added in a usable manner, in a way that is understandable and can be leveraged by more people than just the technical resources.

That is the critical viewpoint that a large, enterprise focused company can bring to BI, the cloud and big data. That’s why it’s foolish to write off the large companies, the ones with expertise in not just technology, but in business and business relationships. They might not move as fast, but they can move to the right places with the right products and the right business messages.

DBTA Webinar Review: Leveraging Big Data with Hadoop, NoSQL and RDBMS

A presentation last week, hosted by Database Trends and Applications (DBTA), was a great example of some interesting technical information presented poorly. As that sentence implies, this column is one about the marketing of business intelligence (BI), not about the technology – well, not much…

There were three presenters: Brian Bulkowski, CTO and Co-founder, Aerospike; Kevin Petrie, Senior Director and Technology Evangelist, Attunity; Reiner Kappenberger, Global Product Management, HPE Security – Data Security.

Aerospike

Brian was first at the podium. Aerospike is a company providing what they claim is a very high speed, scalable database, proudly advertising “NoSQL!” The problem they have is that they are one of many companies still confused about the difference between databases and SQL. A database is not the access method. What they’re really focused on is loosely structured data, the same way Hadoop and other newer databases are aimed. That doesn’t obviate the need to communicate via SQL.

He also said that the operational in-memory market is “owned by NoSQL.” However, there were no numbers. Standard RDBMS’s, columnar and NoSQL databases all are providing in-memory storage and processing. In fact, Information Management has a slide show of Gartner’s database analytics vendor report and you can see the breadth there. In addition, what I constantly hear (not statistically significant either…) is that Hadoop and other loosely-structured databases are still primarily for batch. However, as the slide show I just mentioned is in alphabetical order, Aerospike is the first one you’ll see. Note again that I’m pointing out flaws in the marketing message, not the products. They could have a great in-memory solution, but that doesn’t mean NoSQL is the only in-memory option.

The final key marketing issue is that he kept misusing “transactional.” He continued to talk about RDBMS’s as transactional systems even while he talked about the power of Aerospike for better handling the transactions. In the later portion of his presentation, he was trying to say that RDBMS’s still had a place, but he was using the wrong term.

Attunity

Attunity’s Kevin Petrie was second and his focus was on Attunity Replicate. The team of Aerospike and Attunity again shows the market isn’t yet mature enough to have ETL and databases come smoothly together. Kevin talked about their 35 sources and it seems that they are the front end in the marketing pairing of the two companies. If you really need heterogeneous data sources and large database manipulation, you’ll need to look at the pair of companies.

My key issue with this section was one of enterprise priorities. Perhaps the one big, anonymous reference they both discussed drove the webinar, but it shouldn’t have owned the message. Mr. Petrie spent almost all his time talking about Hadoop, MongoDB and Kafka. Those are still bleeding edge tools while enterprise adoption requires a focus on integrating with standard and existing sources. Only at the end, his third anonymous case, did Kevin have a slide that mentioned RDBMS sources. If he wants to keep talking with people running experimental and leading edge tests of systems, that priority makes sense. If he wishes to talk to the larger enterprise market, he needs to turn things around.

The other issue was a slide that equated RDBMS, Data Warehouse and Hadoop as being on equal footing. There he shows a lack of business knowledge. The EDW, as an old TV show would declare, is the one of these things that is not like the others. It has a very different purpose from the two database technologies and isn’t technology dependent.

HPE Security

Reiner Kappenberger gave a great presentation but it didn’t belong. It seems the smaller two firms were happy to get HPE to help with the financing but they didn’t think about staying on message.

Let me make it very clear: Security is of critical importance. What Mr. Kappenberger had to say was very important for people to hear. However, it didn’t belong in this webinar. The topic didn’t fit and working to stuff three presenters into forty minutes is always tough. Another presentation where all three talked about how they work to ensure that the large volumes of data can be secure at multiple levels would have been great to hear – and I hope the three choose to create such a webinar.

Summary

This was two different webinars stuffed into one, blurring the message. In addition, Aerospike and Attunity either need to make sure they’re not yet trying to address the mass market or they need to learn how to stop speaking to each other and other leading edge people and begin to better address the wider enterprise market.

The unnamed reference seemed to be a company that needed help with credit card transactions and fraud detection, and all three companies worked to provide a full solution. However, from a marketing standpoint I don’t think they did proper service to their project by this webinar.

TDWI & Teradata: An overview of data-centric security

Yesterday’s TDWI webinar was focused on data-centric security. The tag team was Fern Halper, Research Director for Advanced Analytics, TDWI, and Jay Irwin, Director of InfoSec, Teradata. It’s always nice when the two halves of a sponsored presentation fit well. For that reason and for the content, this was a nice presentation.

Everyone in the industry knows that data breaches happen, and we all talk about the issue. I’ve seen a few articles and lists about the number of successful attacks, but Fern Halper pointed us to a nice graphic from Information is Beautiful. She also pointed to another study that showed that “In 2013, 33% of respondents said their company had a data breach. In 2014 the percentage has increased to 43%.” It’s always a race between black hats and white hats, so it’s important to minimize not only your chance of getting hacked, but also to minimize the importance and usefulness of data gained from successful hacks.

Ms. Halper then discussed four types of data security:

- Perimeter security: monitoring network access for intrusion detection.

- Authorization and Access: Password and role based data protections.

- Encryption: Using cryptography to encode data.

- Logging and monitoring: Analyzing access patterns.

Each part is necessary but insufficient. Authorization is only as strong as people’s passwords. If it’s easy to steal the encryption key, encryption doesn’t matter. A robust security system leverages all the types.

One important note: Later in her presentation and throughout Jay Irwin’s section, encryption didn’t exist alone but alongside tokenization. The latter is a different security technology, where characters, words, numbers and fields are replaced with other symbols, or tokens, that still look as if they’re real and can still be used in analysis. Mr. Irwin pointed out he prefers “data protection” as a rubric that covers all the techniques of data level security.
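
For readers who want to see the difference from encryption in concrete terms, here is a minimal sketch of tokenization. It is my own toy illustration, not anything shown in the webinar or taken from Teradata’s products: the `TokenVault` class and its method names are hypothetical, and a real system would protect the vault itself.

```python
import secrets
import string


class TokenVault:
    """Toy token vault (hypothetical): swaps real values for look-alike tokens."""

    def __init__(self):
        self._to_token = {}  # real value -> token
        self._to_value = {}  # token -> real value

    def tokenize(self, value: str) -> str:
        # Reuse an existing token so joins and counts still work in analysis.
        if value in self._to_token:
            return self._to_token[value]
        while True:
            token = "".join(self._random_like(ch) for ch in value)
            if token not in self._to_value:  # retry on a collision
                break
        self._to_token[value] = token
        self._to_value[token] = value
        return token

    def detokenize(self, token: str) -> str:
        # Only possible with access to the vault; there is no key to steal.
        return self._to_value[token]

    @staticmethod
    def _random_like(ch: str) -> str:
        # Digits become random digits, letters become random letters,
        # and punctuation is kept so the token keeps the original format.
        if ch.isdigit():
            return secrets.choice(string.digits)
        if ch.isalpha():
            return secrets.choice(string.ascii_letters)
        return ch


vault = TokenVault()
card_token = vault.tokenize("4111-1111-1111-1111")
```

The token keeps the shape of a card number, so reports and joins built on that field keep working, while the real value lives only in the vault.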

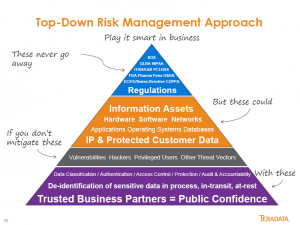

Along with that clarification, Jay Irwin also described the multiple layers as “Defense in Depth,” a concentric ring of security to ensure there’s no single point of failure. Jay also provided my favorite slide of the presentation. While it’s too wordy, it’s a pretty clear view of Teradata’s top-down approach.

An organization must start with understanding the rules and regulations that drive data security. Only then can you identify the data assets that need special attention in order to protect them from hackers.

Jay has a lot more to say in a lot more detail, and I won’t cover it all. While I blog about webinars so you don’t have to watch, this one’s an exception. If you want to get a good, broad view of core data security issues, take some time and listen to the webinar.

TDWI Webinar Review: Fast Decision Making with Analytics

This is more of a marketing flavored post as the recent presentation seemed to miss its own point. The title implied it was about fast decision making, but Fern Halper, TDWI Research Director for Advanced Analytics, gave a rather generic presentation about the importance of operationalizing analytics.

Fern gave a nice presentation about operationalizing analytics, but it was not significantly different than her last few. In addition, some of the survey issues discussed were clearly not well thought out. For instance, Ms. Halper listed the expected growth of predictive analytics and web/mobile analytics as if they belonged in the same discussion. The fact that web and mobile are methods of display doesn’t overlap with whether they are used to display descriptive or prescriptive analytics. The growth of those display methods also doesn’t move away from the use of dashboards in CRM and ERP applications, as was implied, since those applications will migrate views to the new display methods.

The best thing mentioned by both Fern Halper and the SAP presenters was the need for multiple data sources, which came up several times. Seeing the continued refocusing of many firms on wide data rather than big data is a good thing for the industry. Big data is more of a technical issue while wide data more directly addresses complex business environments.

Now I’m hoping for more people to begin to refer to loosely structured data rather than unstructured data. Linguists, I’m sure, are constantly amused at hearing languages referred to as unstructured.

The case study was by Raj Rathee, Director, Product Management, SAP. It was an interesting project at Lufthansa, where real-time analytics were used to track flight paths and suggest alternative routes based on weather and other issues. The business key is that costs were displayed for alternate routes, helping the decision makers integrate cost and other issues as situations occur. However, that was really the only discussion of fast decision making with analytics.

The final marketing note is that the Q&A was canned but the answers didn’t always sync up. For instance, the moderator asked one question of Fern, she had a good answer, but there was no slide in the pack about her response, just the canned SAP slide referenced by Ashish Sahu, Director, Product Marketing, SAP, after Ms. Halper spoke.

I think the problem was that the presenters didn’t focus down on a tight enough message and tried to dump too much information into the presentation. The message got lost.

Diyotta: Data integration for the enterprise

I’m still catching up and reviewed a video of last month’s Diyotta presentation to the BBBT. The company is another young, founded in 2011, data integration company working to take advantage of current technologies to provide not just better data integration but also better change management of modern data infrastructures. In many ways, they’re similar to another company, WhereScape, which I discussed last year. Both are young and small, while the market is large and the need is great.

The presentation was given by Sanjay Vyas, CEO, and John Santaferraro, CMO. The introduction by Sanjay was one of the best from a small company founder that I’ve seen in a long time. He gave a brief overview of the company, its size and its global structure (with HQ in Charlotte, NC, and two offshore development centers). Then he went straight to what most small companies leave for last: He presented a case study.

My biggest B2B marketing point is that you need to let the market know you understand it. Far too many technical founders spend their time talking about the technology they built to solve a business problem, not the business problem that was addressed by technology. Mr. Vyas went to the heart of the matter. He showed the pain in a company, the solution and, most importantly, the benefits. That is what succeeds in business.

It also wasn’t an anonymous reference. It was Scotiabank, a leading Canadian bank with a global presence. When a company that large gives a named reference to a startup as small as Diyotta, you know the firm is happy.

John Santaferraro then took over for a bit with mostly positive impact. While he began by claiming a young product was mature because it’s version 3.5, no four year old firm still working on angel investments has a fully mature product. From the case study and what was demo’d later, it’s a great product but it’s clear it’s still early and needs work. There’s no need to oversell.

The three main markets John said Diyotta aims at are:

- Big data analytics.

- Data warehouse modernization.

- Hybrid data integration including cloud and on-premises (though John was another marketing speaker who didn’t want to use the “s” at the end).

While the other two are important, I think it’s the middle one that’s the sweet spot. They focus on metadata to abstract business knowledge of sources and targets. While many IT organizations are experimenting with Hadoop and big data, getting a better understanding and improved control over the entire EDW and data infrastructure as big data is added and new mainline techniques arrive is where a lot more immediate pain exists.

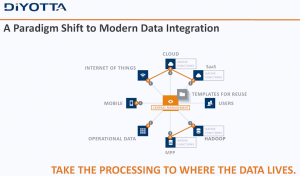

Another marketing miss that could have incorporated that key point was when Mr. Santaferraro said that the old ETL methods no longer work because “having a server in the middle of it … doesn’t exist anymore.” The very next slide was as follows.

Diyotta still seems to have a server in the middle, managing the communications between sources and targets through metadata abstraction. The little “A’s” in the data extremities are agents Diyotta uses to preprocess requests locally to optimize what can be optimized natively, but they’re still managed by a central system.

The message would be more powerful by explaining that the central server is mediating between sources and targets, using metadata, machine learning and other modern tools, to appropriately allocate processing at source, in the engine or in the target in the most optimal way.

While there’s power in the agents, that technology has been used in other aspects of software with mixed results. One concern is that it means a high need for very close partnerships with the systems in which the agents reside. While nobody attending the live presentation asked about that, it’s a risk. The reason Sanjay and John kept talking about Netezza, Oracle and Teradata is because those are the firms for whose products Diyotta has created agents. Yes, open systems such as Hadoop and Spark are also covered, but agents do limit a small company’s ability to address a variety of enterprises. The company is still small, so as long as they focus on firms with similar setups to Scotiabank, they have time to grow, to add more agents and widen their access to sources; but it’s something that should be watched.

On the pricing front, they use pricing purely based on the hub. There’s no per user or per connector pricing. As someone who worked for companies that used pricing that involved connectors, I say bravo! As Mr. Vyas pointed out, their advantage is how they manage sources and targets, not which ones you want them to access. While connecting is necessary, it’s not the value add. The pricing simplifies things and can save money compared with many more complex pricing schemes that charge for parts.

The final business point concerns compliance. An analyst in the room (Sorry, I didn’t catch the name) asked about Sarbanes-Oxley. The answer was that they don’t yet directly address compliance but their metadata will make it easier. For a company that focuses on metadata and whose main reference site is a major financial institution, it would serve their business to add something to explicitly address compliance.

Summary

Diyotta is a young company addressing how enterprises can leverage big data as target and source alongside the existing infrastructure through better metadata management and data access. They are young and have many of the plusses and minuses that involves. They have some great technology but it’s early and they’re still trying to figure out how to address what market.

The one major advantage they have, given what I’ve seen in only a two hour presentation, is Sanjay Vyas. Don’t judge a startup on where they are now or where you think they need to be. Judge them on whether or not management seems capable of getting from point A to point B. Listening to Mr. Vyas, I heard a founder who understands both business and technology and will drive them in the direction they need to go.

IBM, you BM, we all BM for … Spark!

IBM at BBBT

A recent presentation by IBM at the BBBT was interesting. As usual, it was more interesting to me for the business information than the details. As unusual, they did a great job in a balanced presentation covering both. While many presentations lean too heavily in one direction or the other, this one covered both sides very well.

The main presenter was Harriet Fryman, VP of Marketing, IBM Analytics Platform. Adding information during the presentation were Steven Sit, Director of Product Management, Open Source Based Analytics Systems, and Steve Beier, Program Director, Spark Technology Center.

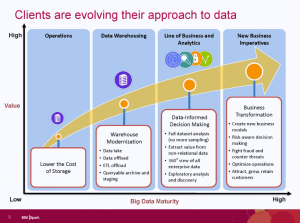

The focus of the talk was IBM’s commitment to Apache Spark. Before diving deep into the support, Ms. Fryman began by talking about business’ evolving data needs. Her key point is that “we all do data hoarding,” that modern technologies are allowing us to hoard far more data than ever before, and that better ways are needed to get value out of the data.

She then proceeded to define three key aspects of the growth in analytics:

- Applying analytics in more parts of business.

- Understanding the time value of data.

- The growth of machine learning and cognitive systems.

The second two overlap, as the ability to analyze large volumes of data in near real-time means a need to have systems do more analysis. The following slide also added to IBM’s picture of the changing focus on higher level information and analytics.

The presentation did go off on a tangent as some analysts overthought the differences in the different IBM groups for analytics and for Watson. Harriet showed great patience in saying they overlap, different people start with different things and internal organizational structures don’t impact IBM’s ability to leverage both.

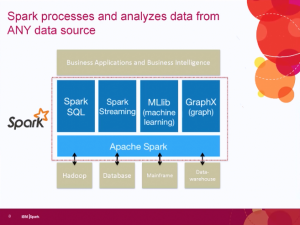

The focus then turned back to Spark, which IBM sees as the unifying layer for data access. One key issue related to that is the Spark v Hadoop debate. Some people seem to think that Spark will replace Hadoop, but the IBM team expressed clear disagreement. Spark is access while Hadoop is one data structure. While Hadoop can allow for direct batch processing of large jobs, using Spark on top of Hadoop allows much more real time processing of the information that Hadoop appropriately contains.

One thing on the slide that wasn’t mentioned but links up with messages from other firms, messages which I’ve supported, is that one key component, in the upper left hand corner of the slide, is Spark SQL. Early Hadoop players were talking about no-SQL, but people are continuing to accept that SQL isn’t going anywhere.

Well, most people. At least fifteen minutes after this slide was presented, an attending analyst asked about why IBM’s description of Spark seemed to be similar to the way they talk about SQL. All three IBM’ers quickly popped up with the clear fact that the same concepts drive both.

While the team continued to discuss Spark as a key business initiative, Claudia Imhoff asked a key question on the minds of anyone who noticed huge IBM going to open source: What’s in it for them? Harriet Fryman responded that IBM sees the future of Spark and to leverage it properly for its own business it needed to be part of the community, hence moving SystemML to open source. Spark may be open source, but the breadth and skills of IBM mean that value added applications can be layered on top of it to continue the revenue stream.

Much more detail was then stated and demonstrated about Spark, but I’ll leave that to the more technical analysts and vendors who can help you.

One final note put here so it didn’t distract from the main message or clutter the summary. Harriet, please. You’re a great expert and a top marketing person. However, when you say “premise” instead of “premises,” as you did multiple times, it distracts greatly from making a clear marketing message about the cloud.

Summary

IBM sees the future of data access to be Apache Spark. Its analytics group is making strides not only to align with open source, but to be an involved player to help the evolution of Spark’s data access. To ignore IBM’s combined strength in understanding enterprise business, software and services is to not understand that it is a major player in some of the key big data changes happening today. The IBM Spark initiative isn’t a marketing ploy, it’s real. The presentation showed a combination of clear business thought and strategy alongside strong technical implementation.

Webinar review: TDWI on Streaming Data in Real Time, in Memory

The Internet of Things (IoT) is something more and more people are considering. Wednesday’s TDWI webinar topic was “Stream Processing: Streaming Data in Real Time, in Memory,” and the event was sponsored by both SAP and Intel. Nobody from Intel took part in the presentation. Given my other recent post about too many cooks, that’s probably a good thing, but there was never a clear reason expressed for Intel’s sponsorship.

Fern Halper began with an overview of how TDWI is seeing data streaming progress. She briefly described streaming as dealing with data while still in motion, as opposed to data in warehouses and other static structures. Ms. Halper then proceeded to discuss the overlap between event processing, complex event processing and stream mining. The issue I had is that she should have spent a bit more time discussing those three terms, as they’re a bit fuzzy to many. Most importantly, what’s the difference between the first two?

The primary difference is that complex event processing is when data comes from multiple sources. Some of the same steps are necessary as in ETL. That’s why the in-memory message was important in the presentation. You have to quickly identify, select and merge data from multiple streams and in-memory is the way to most efficiently accomplish that.
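
To make the “identify, select and merge” step concrete, here is a minimal sketch in plain Python. It is an illustration under my own assumptions, not anything shown in the webinar: the function names, the `(timestamp, source, value)` event layout and the sample readings are all made up, and each stream is assumed to arrive already ordered by time.

```python
import heapq
from collections import deque


def merge_streams(*streams):
    """Merge time-ordered streams of (timestamp, source, value) events in memory."""
    return heapq.merge(*streams, key=lambda event: event[0])


def detect_complex_events(events, window_seconds=5.0):
    """Yield pairs of events from different sources that occur close together."""
    recent = deque()  # events still inside the time window
    for event in events:
        timestamp = event[0]
        # Drop events that have aged out of the window.
        while recent and timestamp - recent[0][0] > window_seconds:
            recent.popleft()
        # A "complex" event here is simply two sources reporting close together.
        for earlier in recent:
            if earlier[1] != event[1]:
                yield (earlier, event)
        recent.append(event)


# Hypothetical readings from two medical monitors.
heart_rate = [(0.0, "heart_rate", 150), (10.0, "heart_rate", 155)]
blood_pressure = [(2.0, "blood_pressure", 180), (30.0, "blood_pressure", 120)]

matches = list(detect_complex_events(merge_streams(heart_rate, blood_pressure)))
```

A single-source event processor would only ever see one of those lists. The merge, and the short in-memory window that follows it, is what lets two separate feeds be treated as one event.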

Ms. Halper presented the survey results about the growth of streaming sources. As expected, it shows strong growth should continue. I was a bit amused that it asked about three categories: real-time event streams, IoT and machine data. While it might make sense to ask the different terms, as people are using multiple words, they’re really the same thing. The IoT is about connecting things, which interprets as machines. In addition, the main complex events discussed were medical and oil industry monitoring, with data coming from machines.

Jaan Leemet, Sr. VP, Technology, at Tangoe then took over. Tangoe is an SAP customer providing software and services to improve their IT expense management. Part of that is the ability to track and control network usage of computers, phones and other devices, link that usage to carrier billing and provide better cost control.

A key component of their needs isn’t just that they need stream processing, but that they need stream processing that also works with other less dynamic data to provide a full solution. That’s why they picked SAP’s Event Stream Processor – not only for the independent functionality but because it also fits in with their SAP ecosystem.

One other decision factor is important to point out, given the message Hadoop and other no-SQL folks like to give. SAP’s solution works in a SQL-like language. SQL is what IT and business analysts know, so the smart bet for rapid adoption is to understand that and do what SAP did. Understand the customer and sales becomes easier. That shouldn’t be a shock, but technologists are often too enamored of themselves to notice.
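
To show why a SQL-like language lowers the barrier, here is a rough sketch of the kind of question a usage-tracking firm might ask, written in ordinary SQL. It is not SAP’s language and not a true continuous query: it uses Python’s built-in `sqlite3` module with an in-memory table standing in for a stream, and the table, columns and sample rows are all invented for illustration.

```python
import sqlite3

# An in-memory table stands in for a stream of network usage events.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE usage_events (device TEXT, ts INTEGER, bytes INTEGER)")
conn.executemany(
    "INSERT INTO usage_events VALUES (?, ?, ?)",
    [
        ("phone-1", 5, 100),
        ("phone-1", 50, 200),
        ("phone-1", 65, 50),
        ("laptop-7", 10, 1000),
    ],
)

# Total bytes per device per 60-second window: the kind of question
# an analyst who already knows SQL can write without retraining.
rows = conn.execute(
    """
    SELECT device,
           (ts / 60) * 60 AS window_start,
           SUM(bytes)     AS total_bytes
    FROM usage_events
    GROUP BY device, window_start
    ORDER BY device, window_start
    """
).fetchall()
conn.close()
```

A streaming engine would keep a query like this running and emit results as each window closes, but the analyst is still writing the same familiar `SELECT … GROUP BY`.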

Neil McGovern, Sr. Director, Marketing, at SAP gave the expected pitch. It was smart of them to have Jaan Leemet go first and it would have been better if Mr. McGovern’s presentation was even shorter so there would have been more time for questions.

Because of the three presenters, there wasn’t time for many questions. One of the few questions for the panel asked if there was such a thing as too much data. Neil McGovern and Jaan Leemet spent time talking about the technology of handling lots of streaming data, but only in generalities.

Fern Halper turned it around and talked about the business concept of too much data. What data needs to be seen at what timeframe? What’s real-time? Those have different answers depending on the business need. Even with the large volume of real-time data that can be streamed and accessed, we’re talking about clustered servers, often from a cloud partner, and there’s no need to spend more money on infrastructure than necessary.

I would have liked to have heard a far more in-depth discussion about how to look at a business and decide which information truly requires streaming analysis and which doesn’t. For instance, think about a manufacturing floor. You want to quickly analyze any data that might indicate failures that would shut down the process, but the volumes of information that allow analysis of potential process improvements don’t need to be analyzed in the stream. That can be done through analysis of a resultant data store. Yet all the information can be coming across the same IoT feed because it’s a complex process. Firms need to understand their information priority and not waste time and money analyzing information in a stream for no purpose other than you can.
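
As a rough illustration of that prioritization, here is a small sketch of one feed being split in two: readings that might signal a failure are flagged in the stream, while everything is stored for later analysis. The `Reading` and `Router` names, the metrics and the thresholds are all hypothetical and chosen only to make the point.

```python
from dataclasses import dataclass, field


@dataclass
class Reading:
    machine: str
    metric: str
    value: float


# Hypothetical limits; only these metrics justify in-stream analysis.
FAILURE_LIMITS = {"temperature_c": 90.0, "vibration_mm_s": 12.0}


@dataclass
class Router:
    alerts: list = field(default_factory=list)       # handled immediately
    batch_store: list = field(default_factory=list)  # analyzed later, at rest

    def handle(self, reading: Reading) -> None:
        limit = FAILURE_LIMITS.get(reading.metric)
        if limit is not None and reading.value > limit:
            # Possible failure: worth the cost of acting on it in the stream.
            self.alerts.append(reading)
        # Every reading is kept for process-improvement analysis afterwards.
        self.batch_store.append(reading)


router = Router()
feed = [
    Reading("press-3", "temperature_c", 72.0),
    Reading("press-3", "cycle_time_s", 4.2),
    Reading("press-3", "temperature_c", 95.5),
]
for reading in feed:
    router.handle(reading)
```

The expensive real-time path only ever sees the handful of metrics that can stop the line. The cycle times still arrive on the same feed, but they wait in the store until someone has a question to ask of them.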