Data virtualization. What is it? A few companies have picked up the term and run with it, including last week’s BBBT presenter Denodo. The presentation team was Suresh Chandrasekaran, Sr. VP, North America; Paul Moxon, Sr. Director, Product Management & Solution Architecture; and Pablo Alvarez, Sales Engineer. Still, what I’ve not seen is a clear definition of the phrase. The Denodo team did a good job describing their successes and some of the features behind them, but they avoided giving a clear definition.

Data Virtualization

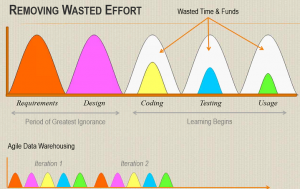

The companies doing data virtualization are working to create a virtual data structure where the logical definitions link back to disparate live systems instead of overlaying a single aggregated database of information. It’s the concept of a federated data warehouse from the 1990s, extended past the warehouse and now more functional because of technology improvements.
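To make that idea concrete, here is a minimal sketch in Python. It is not Denodo's API or how any vendor implements this. The two in-memory SQLite databases stand in for separate live systems, and the `VirtualCustomerTotals` class, the table names and the sample rows are all hypothetical, invented for illustration. The point it tries to show is that the logical view stores no data of its own: each request goes back to the sources.

```python
import sqlite3


def make_crm():
    """Hypothetical live system #1: a CRM holding customer names."""
    db = sqlite3.connect(":memory:")
    db.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT)")
    db.executemany(
        "INSERT INTO customers VALUES (?, ?)",
        [(1, "Acme"), (2, "Globex")],
    )
    return db


def make_orders():
    """Hypothetical live system #2: an order-entry system."""
    db = sqlite3.connect(":memory:")
    db.execute("CREATE TABLE orders (customer_id INTEGER, amount REAL)")
    db.executemany(
        "INSERT INTO orders VALUES (?, ?)",
        [(1, 100.0), (1, 50.0), (2, 75.0)],
    )
    return db


class VirtualCustomerTotals:
    """A logical view: it keeps connections to the sources, not a copy of the data."""

    def __init__(self, crm, orders):
        self.crm = crm
        self.orders = orders

    def rows(self):
        # Each call queries both live systems and joins the results in memory.
        totals = dict(
            self.orders.execute(
                "SELECT customer_id, SUM(amount) FROM orders GROUP BY customer_id"
            )
        )
        customers = self.crm.execute("SELECT id, name FROM customers ORDER BY id")
        return [(name, totals.get(cid, 0.0)) for cid, name in customers]


if __name__ == "__main__":
    crm, orders = make_crm(), make_orders()
    view = VirtualCustomerTotals(crm, orders)
    print(view.rows())

    # A new order lands in the operational system; the view sees it on the next read.
    orders.execute("INSERT INTO orders VALUES (2, 25.0)")
    print(view.rows())
```

Note that every call to `rows()` puts a query on both source systems, which is the kind of load that matters once many people are running analyses against the same view.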

A note on terms: sadly, I won’t use an acronym, because DV also means data visualization and who needs the confusion? So more typing… Data virtualization is sometimes thought of, by people who hear about it at a high level, as a way to avoid data warehouses, but as the Denodo team repeatedly pointed out, that’s not the case. Virtualization can simplify and speed some types of analysis, but the need for aggregated data stores isn’t going away.

The biggest problem with using virtualization for everything is that operational systems can’t handle the performance hit of large numbers of queries. A second is that operational systems don’t typically track the historical information needed for business analysis. A third is that very static data that lives in multiple systems and is accessed frequently can create an unnecessary load on today’s busier and busier networks; consolidating that information can simplify and speed access. A fourth is that change management becomes a major issue, with changes to one small system potentially causing changes to many systems and reports. There are others, but they in no way undermine the value of virtualization.

As Pablo Alvarez discussed, virtualization and a warehouse can work well together to help companies blend data of different latencies, with virtualization bringing in dynamic data to mesh with historic and dimensional information to provide the big picture.

Denodo

Denodo seems to have a very good product for virtualization. However, as I keep pointing out when listening to the smaller companies, they haven’t yet meshed their high level ideas about virtualization and their products into a clear message. The supposed marketechture slide presented by Suresh Chandrasekaran was very technical, not strategic. Where he really made a point was in discussing what makes a Denodo pitch successful.

Mr. Chandrasekaran states that pure business intelligence (BI) sales are a weak pitch for data virtualization and that a broader data need is where the value is seen by IT. That makes absolute sense as the blend between BI and real-time is just starting and BI tends to look at longer latency data. It’s the firms that are accessing a lot of disparate systems for all types of productivity and business analysis past the focus on BI who want to get to those disparate systems as easily as possible. That’s Denodo’s sweet spot.

While their high level message isn’t yet clarified or meshed with markets and products, their product marketing seems to be right on track. They’ve created a very nicely scaled product offering.

Denodo Express is the free version of their platform. Paul Moxon stated that it’s fully functional, but it can’t be clustered, has a limit on result set size and can’t access certain data sources. However, it’s a great way for prospects to look at the functionality of the product and to build a proof-of-concept. The other great idea is that Denodo gives Express users a limited-time pricing offer for enterprise licensing. While not providing numbers, Suresh stated that the offer was working well as an incentive for the freeware to not become shelfware, and for prospects to test and move down the sales funnel. To be blunt, I think that’s a great model.

One area they know is a weakness is services, both professional services and support. That’s always an issue with a rapidly growing company and it’s good to see Denodo acknowledge that and talk about how they’re working to mitigate issues. The team said there are plans to expand their capital base next year, and I’d expect a chunk of that investment to go towards this area.

The final thing I’ll note specifically about Denodo’s presentation is their customer slides. That section had success stories presented by the customers in their own words. That was a strong way to show customer buy-in but a weak way to show clear value. Each slide was very different, many were overly complex and most didn’t clearly show the value the customer achieved. It’s nice, but customer stories need to be better formalized.

Data Virtualization as a Market

As pointed out above, in the description of virtualization, it’s a very valuable tool. The market question is simple: Is that enough? There have been plenty of tools that eventually became part of a larger market or a feature in a larger product offering. What about data virtualization?

As the Denodo team seems to admit, data virtualization isn’t a market that can stand on its own. It must integrate with other data access, storage and provisioning systems to provide a whole to companies looking to better understand and manage their businesses. When there’s a new point solution, a tool, partnerships always work well early in the market. Denodo is doing a good job with partners to provide a robust solution to companies, but at some point bigger players don’t want to partner; they want to provide a complete solution themselves.

That means data virtualization companies are going to need to spread into other areas or be acquired. Suresh Chandrasekaran thinks that data virtualization is now at the tipping point of acceptance. In my book, given how fast the software industry, in general, and data infrastructure markets, in particular, grow and evolve, that leaves a few years of very focused growth before the serious acquisitions happen – though I wouldn’t be surprised if it starts sooner. That means companies need to be looking both at near term details and long term changes to the industry.

When I asked about long term strategy, I got the typical startup answer: They’re focused on internal growth rather than acquisition (either direction). That’s a good external message because folks who want a leading edge company want it clear that they’re using a leading edge company, but I hope the internal conversations at the CxO level aren’t avoiding acquisition. That’s not a failure, just a different version of success.

Summary

Denodo is a strong technical company focused on data virtualization in the short run. They have a very nicely scaled model from Denodo Express to their full product. They seem to understand their sweet spot within IT organizations. Given that, any large organization looking to get better access to disparate sources of data should talk with Denodo as part of their evaluation process.

My only questions are about marketing messages and whether or not Denodo will be able to move from technical sales to a higher level, clearer vision that will help them cross the chasm. If not, I don’t think their product will disappear; someone will acquire them. Regardless, Denodo seems to be a strong choice to look at to address data access and integration issues.

Data virtualization is an important niche. The questions that remain are how large the niche is and how long it will remain independent.